Reframing the Replication Crisis as a Crisis of Inference

Abstract

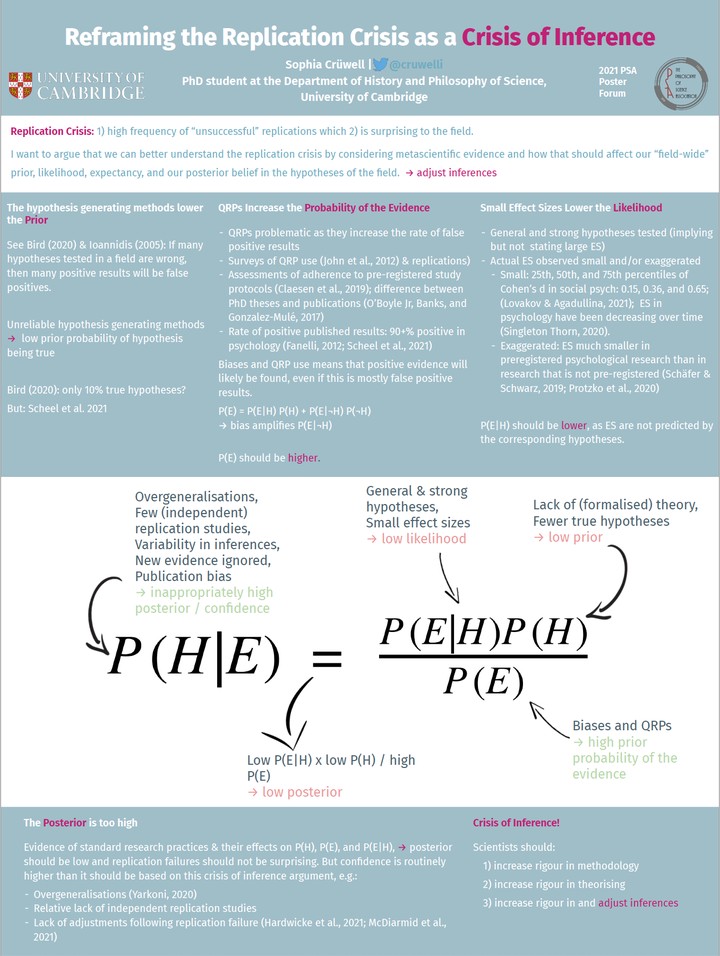

The replication crisis describes a phenomenon in many empirical sciences, most famously psychology, in which several large-scale replication projects have failed to reproduce the original studies' results, finding either no effect at all or much smaller or reversed effects. The crisis is largely attributed to the intentional or unintentional misuse of statistical methods, combined with several cognitive and external biases. A further way to explain, or even fully account for, field-wide replication failures is to consider the possibility of a low prior probability (or base rate) of true hypotheses in the field: if most, or a substantial proportion, of the hypotheses tested in a field are in fact false, then a substantial proportion of positive results will be false positives. A low base rate of true hypotheses as a cause of replication failure has previously been considered by Bird (2020) and Ioannidis (2005). While taking the prior probability of a field’s hypotheses into account is important for understanding the replication crisis, the effects of biases, publication pressures, fraud, underpowered studies, overgeneralisations and a lack of formal theorising are undeniable. In this paper, I aim to give a better overall picture of the replication crisis by combining these explanations. To do so, I will give an extended Bayesian account of the replication crisis that centres the posterior probability of the hypothesis, i.e. the inference we make. I will take empirical evidence from the replication crisis, place it in the context of a Bayesian framework, and consider the implications that follow.
Specifically, I will argue that, in relevant areas of psychological research, the prior probability of the hypotheses is likely lower than generally thought, the likelihood of the evidence given the hypothesis is low due to small effect sizes, and the marginal probability of the evidence is artificially large due to questionable research practices and biases. Our posterior belief in hypotheses tested using standard research practices in many areas of psychology should therefore be weak, whereas it currently appears to be strong. Once we adapt our inferences accordingly, the large-scale replication failures seen in, for example, social psychology will no longer be surprising. I will conclude that, seen through an explicitly Bayesian framework, the replication crisis is better understood as a crisis of inference. There are several upshots of this reframing. Crucially, the first step to solving the crisis of inference is not to solve all the major problems in measurement, theorising, and so on. Instead, psychologists could keep doing the same kind of research, as long as they adjust their inferences. Furthermore, it should be possible to accommodate not just the replication crisis but also the other proposed crises (of measurement, theory and generalisability) under the umbrella of a crisis of inference. It may seem trivial that these issues are connected, but in the context of an increasingly political debate surrounding the replication crisis, centring replication when inference is the problem will continue to lead us astray when trying to find solutions.
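
As a rough illustration of the Bayesian reasoning summarised above, the following sketch computes the posterior probability of a hypothesis after a positive (e.g. statistically significant) result. It treats the hypothesis as simply true or false, which is a simplifying assumption, and all numerical values are hypothetical placeholders chosen for illustration, not estimates taken from this paper or from the replication literature. The function name and parameter names are likewise assumptions of the sketch.

```python
def posterior_given_positive(prior, power, false_positive_rate):
    """Posterior P(H | E) for a binary hypothesis H and a positive result E.

    prior               -- P(H), the base rate of true hypotheses in the field
    power               -- P(E | H), lowered by small effects and small samples
    false_positive_rate -- P(E | not H), inflated by questionable research
                           practices and biases
    """
    for name, value in (("prior", prior), ("power", power),
                        ("false_positive_rate", false_positive_rate)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must lie in [0, 1], got {value}")

    # Marginal probability of the evidence: P(E) = P(E|H)P(H) + P(E|~H)P(~H)
    marginal = power * prior + false_positive_rate * (1.0 - prior)
    if marginal == 0.0:
        raise ValueError("P(E) is zero; the posterior is undefined")

    # Bayes' theorem: P(H|E) = P(E|H)P(H) / P(E)
    return power * prior / marginal


# Hypothetical "textbook" assumptions: half of tested hypotheses are true,
# studies are well powered, and the nominal 5% error rate holds.
optimistic = posterior_given_positive(prior=0.5, power=0.8,
                                      false_positive_rate=0.05)

# Hypothetical "crisis" assumptions: low base rate, low power, and an
# effective false positive rate inflated well above the nominal level.
pessimistic = posterior_given_positive(prior=0.1, power=0.35,
                                       false_positive_rate=0.2)

print(f"optimistic posterior:  {optimistic:.2f}")
print(f"pessimistic posterior: {pessimistic:.2f}")
```

Under the first set of placeholder values the posterior is high, whereas under the second it falls well below one half. This is the sense in which, on the account sketched in this abstract, a positive result should move our belief far less than standard practice suggests.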